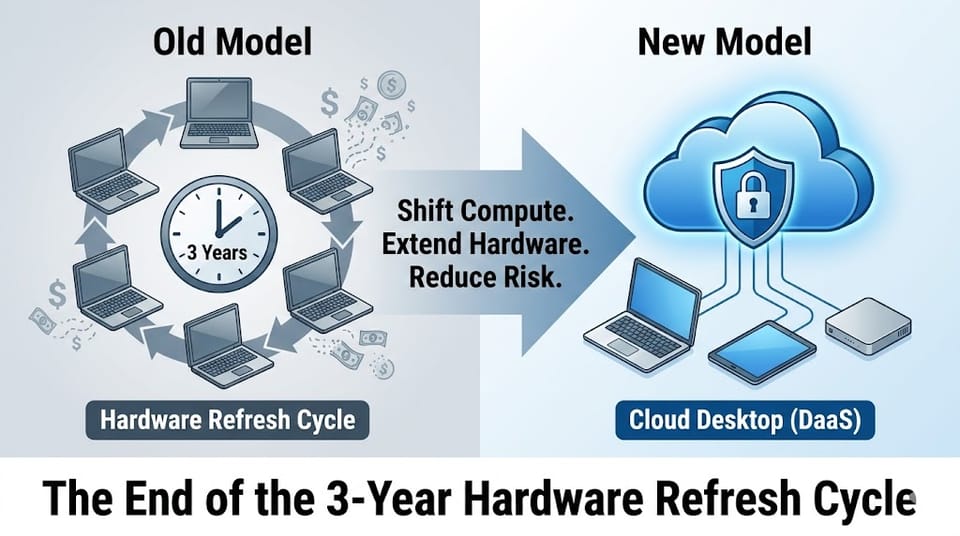

The End of the 3-Year Hardware Refresh Cycle

Why AI Workloads Demand a New Desktop Strategy

For decades, enterprise IT has lived by a simple rhythm:

Buy laptops → depreciate over three years → replace → repeat.

It was predictable. It was manageable. And it worked — when applications were light, storage was local, and performance demands were stable.

That model is now breaking.

Not because devices are worse.

But because applications are fundamentally heavier.

Modern enterprise software is no longer just UI and business logic. It embeds:

- AI inference engines

- Large data pipelines

- Real-time analytics

- Security agents

- Browser-based toolchains

- GPU-accelerated workflows

The result? Endpoints feel “slow” far earlier than their hardware lifecycle suggests.

And organizations respond in the only way they know how: buy more powerful machines.

That approach is financially inefficient, operationally fragile, and strategically short-sighted.

The smarter move is not faster laptops.

It is decoupling compute from hardware.

This is where Desktop-as-a-Service (DaaS) stops being a niche IT tool and becomes an enterprise infrastructure strategy.

The Illusion of Slow Hardware

A common scenario:

A three-year-old enterprise laptop.

16 GB RAM. SSD storage. Still functional.

Yet users complain:

“Apps feel sluggish.”

“Builds take too long.”

“AI tools are unusable.”

IT teams assume the device is obsolete.

In reality, the bottleneck is not silicon — it is workload gravity.

Modern applications are no longer optimized for local execution. They assume:

- Continuous internet connectivity

- Elastic compute

- Server-side acceleration

- Cloud-resident datasets

Running these workloads locally forces endpoints to compete with cloud-scale systems they were never designed to match.

The result: organizations upgrade hardware not because the device failed — but because the architecture failed.

That is not modernization.

That is technical debt disguised as refresh.

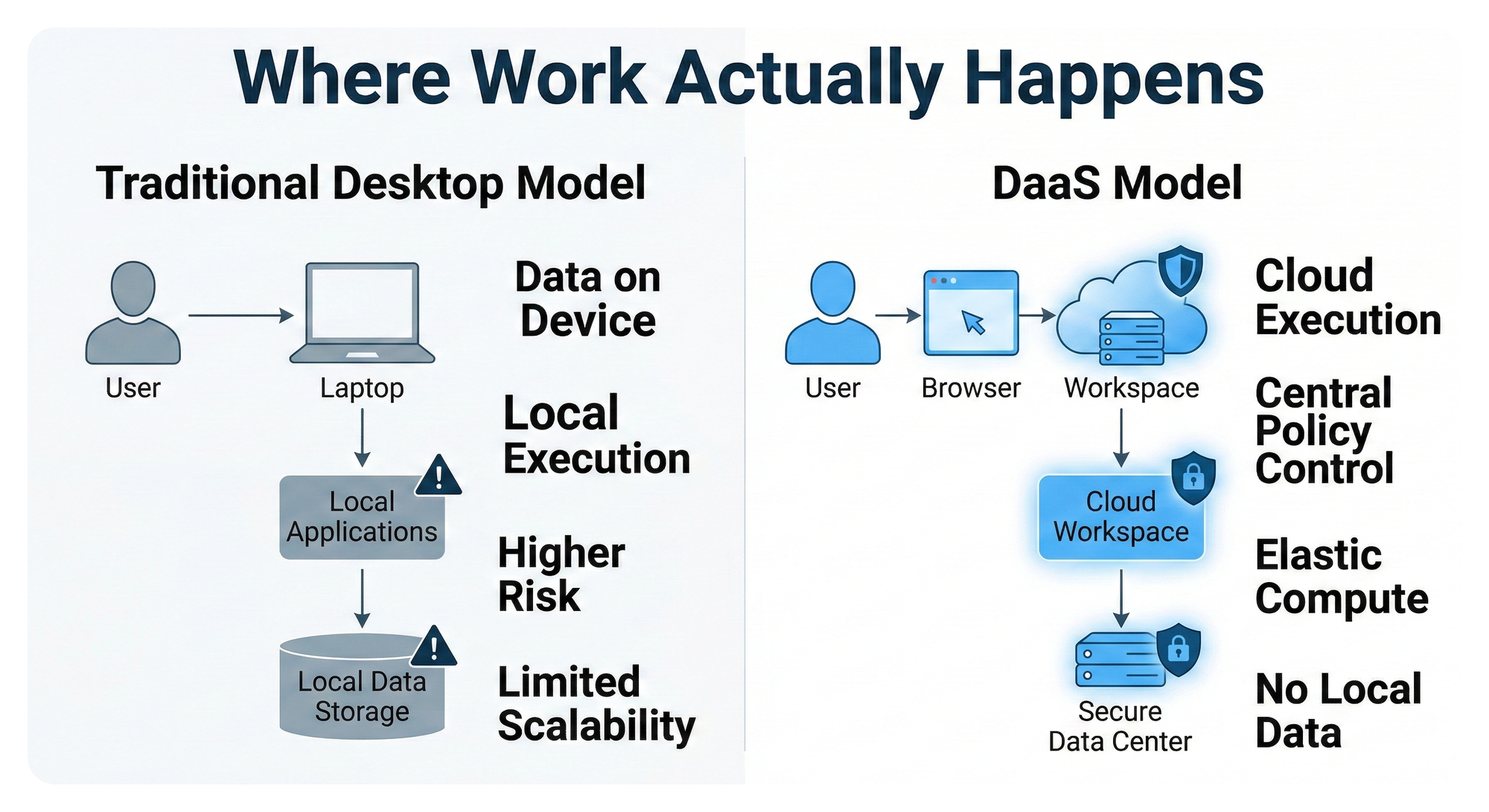

DaaS: A Structural Shift, Not a Cost Trick

Desktop-as-a-Service is often positioned as a virtual desktop alternative. That framing undersells its strategic value.

DaaS changes where intelligence lives.

Instead of:

Powerful endpoint + fragile local environment

You get:

Lightweight endpoint + centralized execution

The endpoint becomes:

- A secure window

- A transport layer

- A display surface

The workspace becomes:

- Cloud-resident

- Policy-controlled

- Centrally patched

- Performance elastic

This single shift unlocks three enterprise-grade outcomes:

- Hardware lifecycle extension

- Capex rationalization

- Security boundary consolidation

Let’s unpack each.

1. Extending Hardware Lifespan Without User Pain

When compute runs in the cloud, the endpoint’s role is dramatically simplified.

It no longer needs:

- High-core CPUs

- GPU acceleration

- Massive local RAM

- Large SSDs

It needs:

- A stable OS

- A browser

- Secure connectivity

This means a laptop from 2022 can deliver the experience of a 2025 workstation — because the performance profile is defined by the cloud, not the device.

For enterprises, this translates to:

- Fewer refresh cycles

- Longer depreciation schedules

- Less e-waste

- Lower procurement volatility

More importantly:

Users perceive improvement without physically changing machines.

That is rare in infrastructure economics.

You are improving experience while reducing capital burn — not trading one for the other.
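To make the refresh arithmetic concrete, here is a minimal sketch that spreads hardware spend evenly over the refresh period. The purchase price and fleet size are hypothetical figures, not benchmarks, and the sketch deliberately leaves out the cloud workspace subscription and support costs that a real comparison would need to include.

```python
def annual_hardware_cost(price_per_device: float, fleet_size: int, refresh_years: int) -> float:
    """Straight-line hardware spend per year for a fleet replaced every refresh_years."""
    if refresh_years <= 0:
        raise ValueError("refresh_years must be positive")
    return price_per_device * fleet_size / refresh_years


# Hypothetical inputs, chosen only to illustrate the calculation.
PRICE_PER_DEVICE = 1500.0
FLEET_SIZE = 1000

three_year = annual_hardware_cost(PRICE_PER_DEVICE, FLEET_SIZE, 3)
five_year = annual_hardware_cost(PRICE_PER_DEVICE, FLEET_SIZE, 5)

print(f"3-year cycle: {three_year:,.0f} per year")
print(f"5-year cycle: {five_year:,.0f} per year")
print(f"Difference:   {three_year - five_year:,.0f} per year")
```

Whether that difference survives depends on what the cloud workspace costs per user, so treat it as the budget the workspace has to fit inside rather than as guaranteed savings.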

2. Capex Optimization Through Role-Based Devices

Traditional IT treats all users the same:

High-spec laptop for everyone

“Just in case”

This creates:

- Overprovisioning

- High loss exposure

- Excess inventory

- Inflexible workforce scaling

With DaaS, devices can be mapped to risk and role, not workload.

- Interns: low-cost netbooks

- Contractors: managed browsers

- Frontline staff: thin clients

- Engineers: cloud workstations

Performance comes from the workspace, not the chassis.
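The role-to-device mapping above could be captured as a small lookup table. This is only a sketch: the `EndpointProfile` type and the `workspace_size` values are hypothetical names introduced for illustration, not part of any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EndpointProfile:
    device_class: str    # what the user physically holds
    workspace_size: str  # assumed label for how much cloud compute the role gets


# Device classes follow the list above; workspace sizes are illustrative guesses.
ROLE_TO_ENDPOINT = {
    "intern": EndpointProfile("low-cost netbook", "small"),
    "contractor": EndpointProfile("managed browser", "small"),
    "frontline": EndpointProfile("thin client", "medium"),
    "engineer": EndpointProfile("cloud workstation", "large"),
}


def endpoint_for(role: str) -> EndpointProfile:
    """Look up the endpoint profile for a role, failing loudly on unknown roles."""
    try:
        return ROLE_TO_ENDPOINT[role]
    except KeyError:
        raise ValueError(f"no endpoint profile defined for role {role!r}") from None


print(endpoint_for("contractor").device_class)
```

Keeping the device class and the workspace size as separate fields reflects the point of this section: the hardware can stay cheap while the compute behind it varies by role.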

This flips the procurement model:

Old way:

Expensive hardware upfront + uncertain usage

New way:

Minimal hardware + elastic cloud compute

From a finance perspective:

- Lower upfront spend

- Predictable operating costs

- Easier scaling up and down

- Reduced asset write-offs
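One way to see the overprovisioning problem is to compare the seats an organization must buy for its peak headcount with the seats it actually uses month by month. The headcount series below is hypothetical, and the sketch assumes hardware is purchased for the peak and kept all year.

```python
def seat_utilization(monthly_headcount: list[int]) -> float:
    """Share of provisioned seat-months actually used when buying for the peak."""
    if not monthly_headcount or max(monthly_headcount) <= 0:
        raise ValueError("monthly_headcount must contain at least one positive value")
    provisioned = max(monthly_headcount) * len(monthly_headcount)
    used = sum(monthly_headcount)
    return used / provisioned


# Hypothetical year: a base team of 100 with a three-month seasonal peak of 160.
headcount = [100] * 9 + [160] * 3

utilization = seat_utilization(headcount)
print(f"Seat utilization when buying for peak: {utilization:.1%}")
```

With elastic workspaces the paid-for capacity can follow the headcount curve, so the unused share is what upfront purchasing leaves on the table in this toy scenario.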

From a security perspective:

- Lost device = lost screen, not lost data

- Zero local intellectual property

- No unmanaged executables

- Centralized session control

This is not just cheaper.

It is structurally safer.

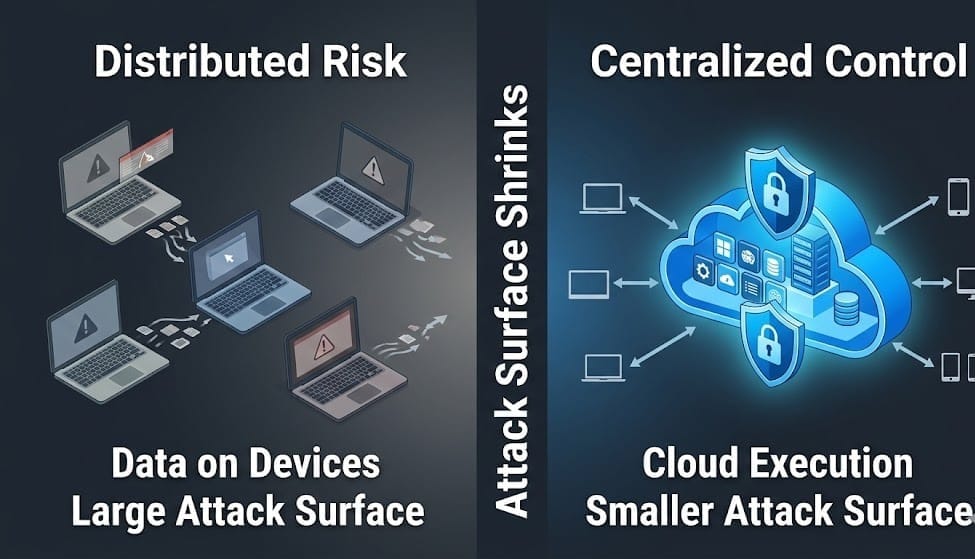

3. Security Boundaries Move Inward

In regulated environments such as banking, financial services and insurance (BFSI), healthcare, pharma, and government, the desktop is a major risk surface.

Local execution means:

- Data touches disk

- Memory can be scraped

- Files can be copied

- Malware has persistence

DaaS collapses the attack surface inward.

Now:

- Data never leaves the workspace

- Files remain cloud-resident

- Sessions are ephemeral

- Policies are enforced centrally

The endpoint becomes:

A terminal, not a trust zone

This simplifies:

- Compliance audits

- Incident response

- Device recovery

- Insider threat mitigation

Security shifts from:

Protect every laptop equally

to

Protect one execution plane consistently

That is a massive architectural advantage.
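Central enforcement of the kind described in this section can be pictured as a single policy object that every session is checked against. The sketch below is an assumption-laden illustration: `SessionPolicy`, its fields, and the action names are hypothetical and do not describe any specific DaaS product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionPolicy:
    allow_clipboard_out: bool = False  # copy from the workspace to the local device
    allow_file_download: bool = False  # move files out of the workspace
    max_session_minutes: int = 480     # assumed lifetime before a session is discarded


def is_allowed(policy: SessionPolicy, action: str) -> bool:
    """Decide centrally whether a session may perform an action; unknown actions are denied."""
    rules = {
        "clipboard_out": policy.allow_clipboard_out,
        "file_download": policy.allow_file_download,
    }
    return rules.get(action, False)


def session_expired(policy: SessionPolicy, elapsed_minutes: int) -> bool:
    """Ephemeral sessions end once they reach the configured lifetime."""
    return elapsed_minutes >= policy.max_session_minutes


contractor_policy = SessionPolicy()
print(is_allowed(contractor_policy, "file_download"))
print(session_expired(contractor_policy, elapsed_minutes=500))
```

Denying unknown actions by default mirrors the idea of treating the endpoint as a terminal rather than a trust zone: nothing leaves the workspace unless the central policy says so.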

Why AI Accelerates This Transition

AI workloads magnify every weakness of local execution:

- Model size increases

- Memory pressure spikes

- GPU dependence grows

- Latency budgets tighten

Trying to satisfy this with endpoint upgrades is a losing game.

Each upgrade cycle brings:

- Faster chips

- Higher thermal envelopes

- More fragile batteries

- Shorter useful lives

Cloud execution scales in the opposite direction:

- Central GPUs

- Shared acceleration

- Pooled memory

- Elastic throughput

An AI-first enterprise cannot rely on hardware refresh cycles.

It requires infrastructure elasticity.

That is not possible when intelligence lives on laptops.

Operational Reality: IT Teams Don’t Want More Complexity

A hidden benefit of DaaS is not financial.

It is operational sanity.

Centralized workspaces mean:

- One image to patch

- One place to monitor

- One policy engine

- One audit trail

Instead of:

- Thousands of local states

- Device-specific drift

- Update timing chaos

- Inconsistent user experience

IT stops managing machines.

IT manages environments.

That is a maturity jump, not a tooling change.
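The contrast between one image and thousands of local states can be sketched as a drift check against a golden image. The device names and package sets below are invented for illustration, and comparing sets of version strings is a simplification of how real inventory tools work.

```python
def drifted_devices(fleet: dict[str, frozenset[str]], golden: frozenset[str]) -> list[str]:
    """Return the devices whose installed state differs from the golden image."""
    return sorted(name for name, state in fleet.items() if state != golden)


def distinct_states(fleet: dict[str, frozenset[str]]) -> int:
    """Count how many different configurations IT actually has to reason about."""
    return len(set(fleet.values()))


# Hypothetical inventory: package sets are made up for illustration.
GOLDEN = frozenset({"os-2025.1", "agent-4.2", "browser-130"})
laptops = {
    "laptop-a": GOLDEN,
    "laptop-b": frozenset({"os-2024.3", "agent-4.2", "browser-130"}),
    "laptop-c": frozenset({"os-2025.1", "agent-4.1", "browser-128"}),
}
# With centralized workspaces every session starts from the same image.
workspaces = {f"session-{i}": GOLDEN for i in range(3)}

print(distinct_states(laptops), drifted_devices(laptops, GOLDEN))
print(distinct_states(workspaces), drifted_devices(workspaces, GOLDEN))
```

On a laptop fleet the number of distinct states tends to grow with the number of devices; with a single centrally patched image it stays at one by construction.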

The Strategic Implication for Leadership

This is not about virtual desktop infrastructure (VDI) versus laptops.

It is about:

Where does your enterprise compute strategy live?

On:

- Depreciating metal

- Fragmented devices

- User-controlled environments

Or:

- Centralized execution

- Policy-driven access

- Elastic performance

Leaders who continue investing in hardware as their primary performance lever are optimizing the wrong layer.

The modern stack optimizes:

- Execution

- Access

- Control

- Elasticity

Not device horsepower.

How Neverinstall Approaches This Shift

Neverinstall was built for this exact transition:

Browser-native workspaces that abstract away hardware constraints.

Instead of pushing complexity to endpoints, it:

- Centralizes execution

- Delivers through the browser

- Keeps data in controlled environments

- Enables rapid provisioning

- Avoids heavy client installs

This makes it suitable for:

- Regulated industries

- Distributed teams

- Contractors and vendors

- AI-heavy workloads

- Legacy application access

The model is simple:

The device becomes replaceable.

The workspace becomes strategic.

That is how hardware refresh becomes optional instead of mandatory.

Bottom Line

The three-year hardware refresh cycle was designed for a world where:

- Software was light

- Security was perimeter-based

- Compute was local

- Workloads were predictable

That world no longer exists.

Today:

- Applications are heavier

- Threats are internal as well as external

- Work is distributed

- AI is compute-hungry

Continuing to solve this with better laptops is tactical thinking in a strategic era.

Desktop-as-a-Service is not an optimization.

It is a realignment.

It aligns:

- Cost with usage

- Security with execution

- Performance with elasticity

- Experience with policy

For enterprises under pressure from:

- Rising capex

- AI adoption

- Security mandates

- Remote work

- Compliance

The question is no longer:

“Should we modernize desktops?”

It is:

“Why are we still tying performance to hardware at all?”

That is the architectural inflection point.

And organizations that cross it early will not just save money; they will gain operational leverage in an AI-driven economy.